Non-Planar Projections

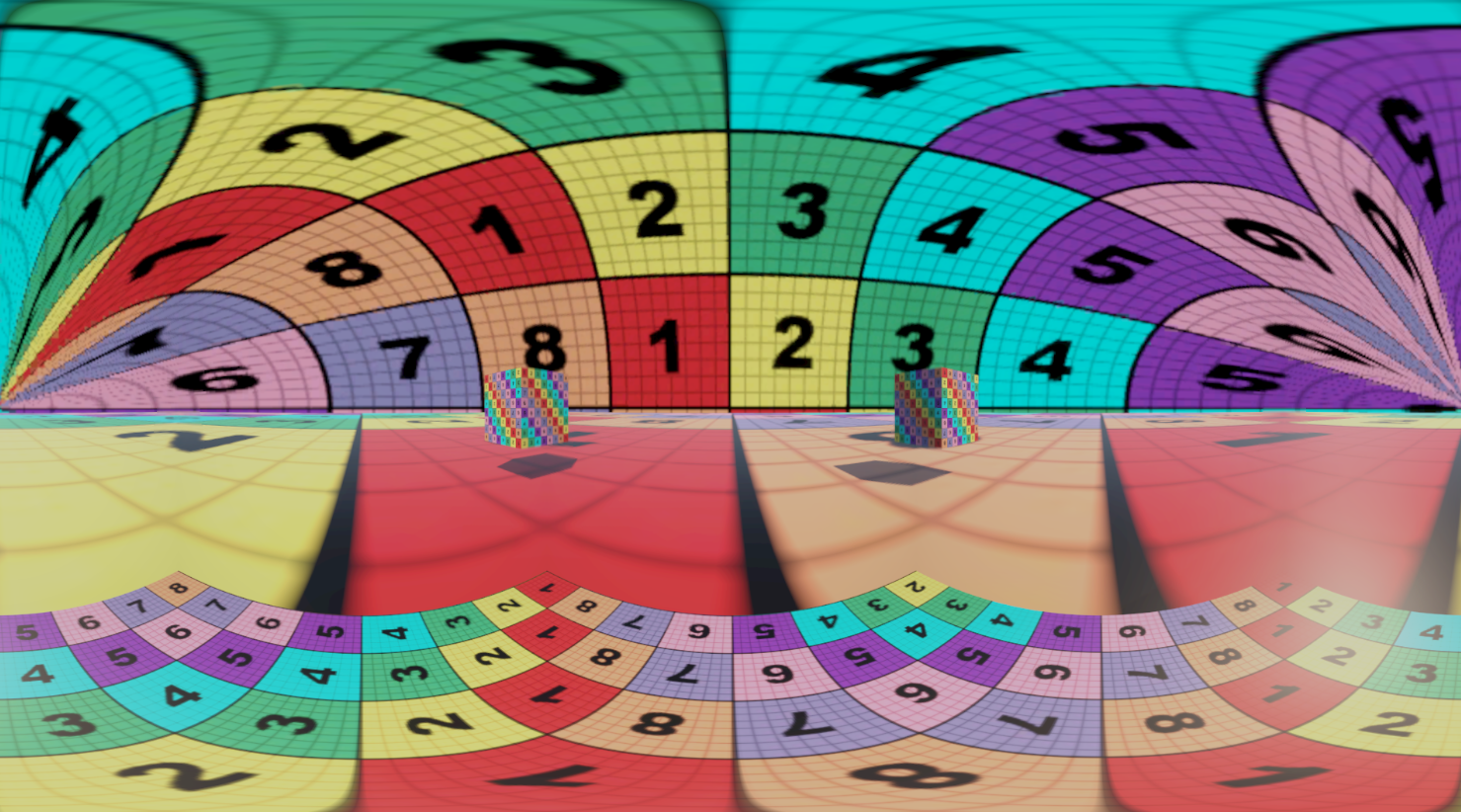

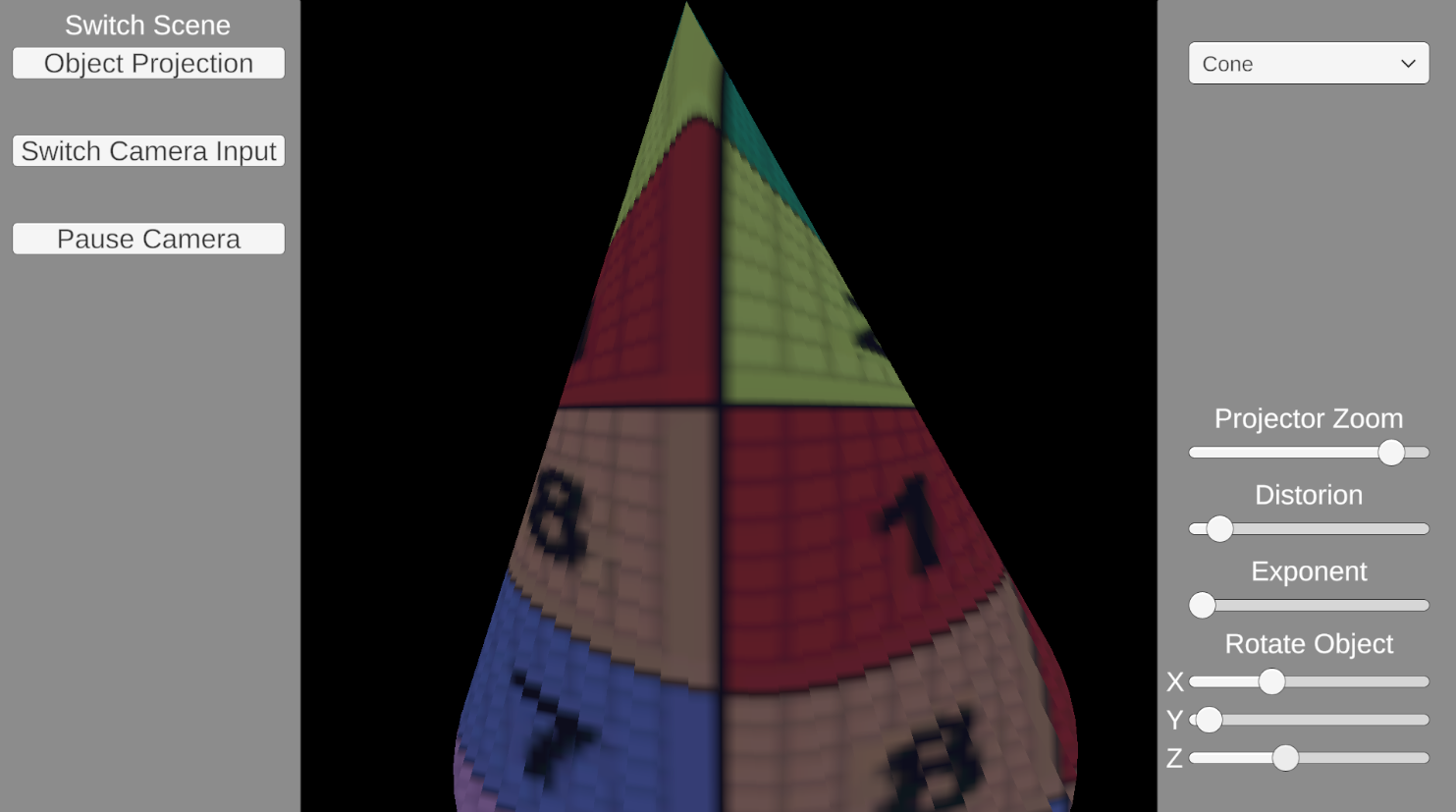

This project investigates the implementation of non-planar projection techniques in Unity, focusing on spherical, conical, and cylindrical projections. Each projection model was implemented in two ways: projected onto a 3D object (object-based) and computed without an intermediary object (object-independent). This distinction enables a comparative evaluation of visual distortion and spatial continuity.

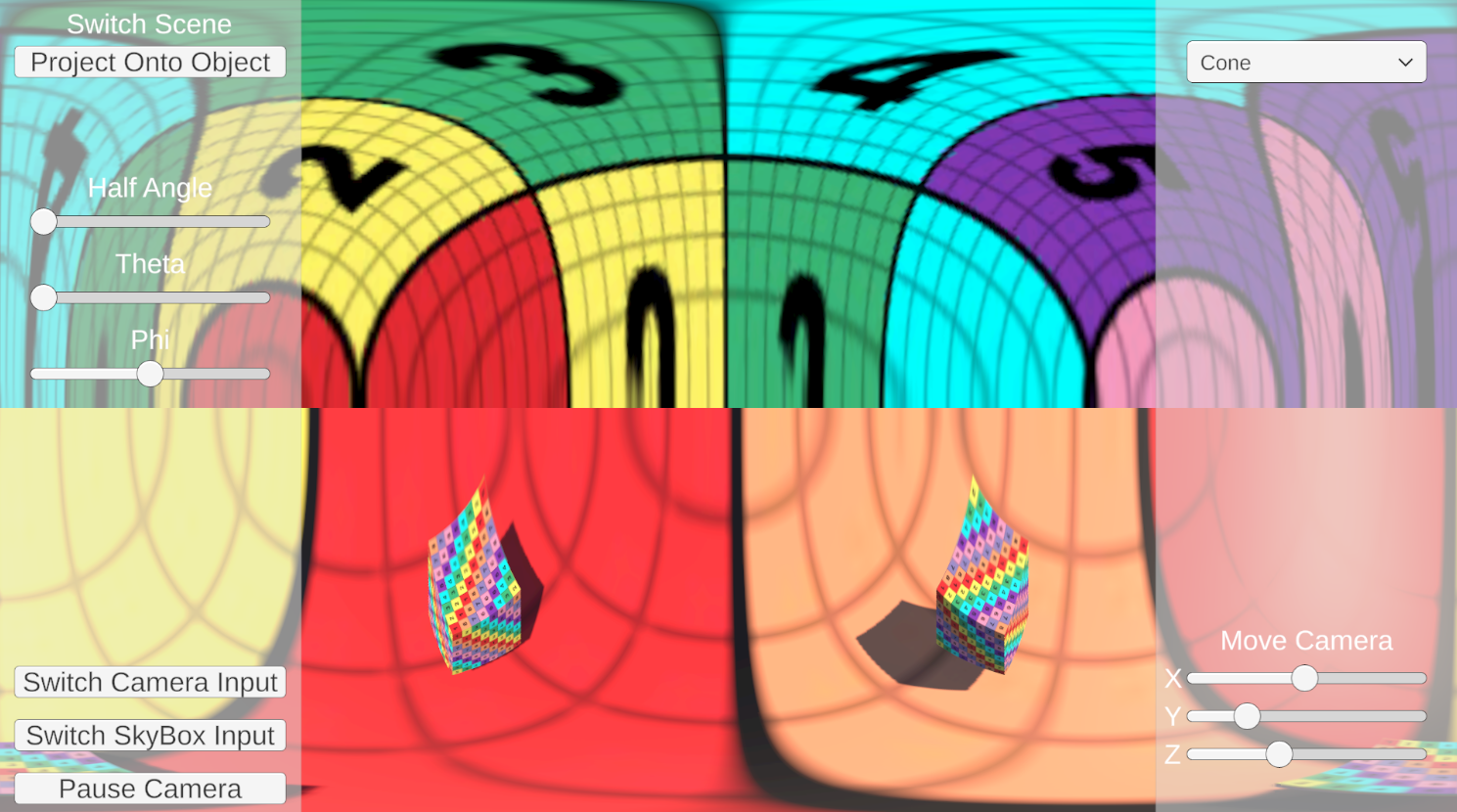

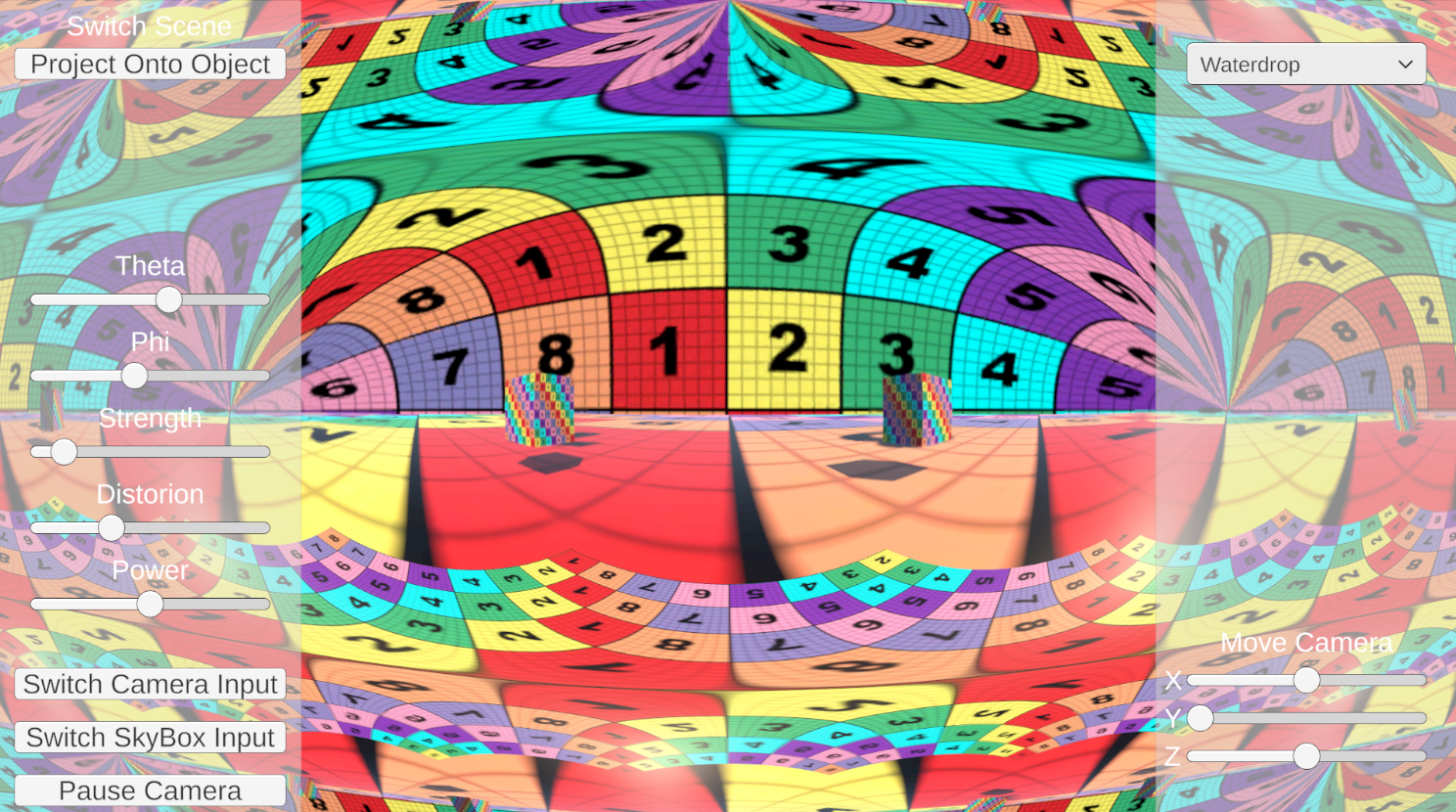

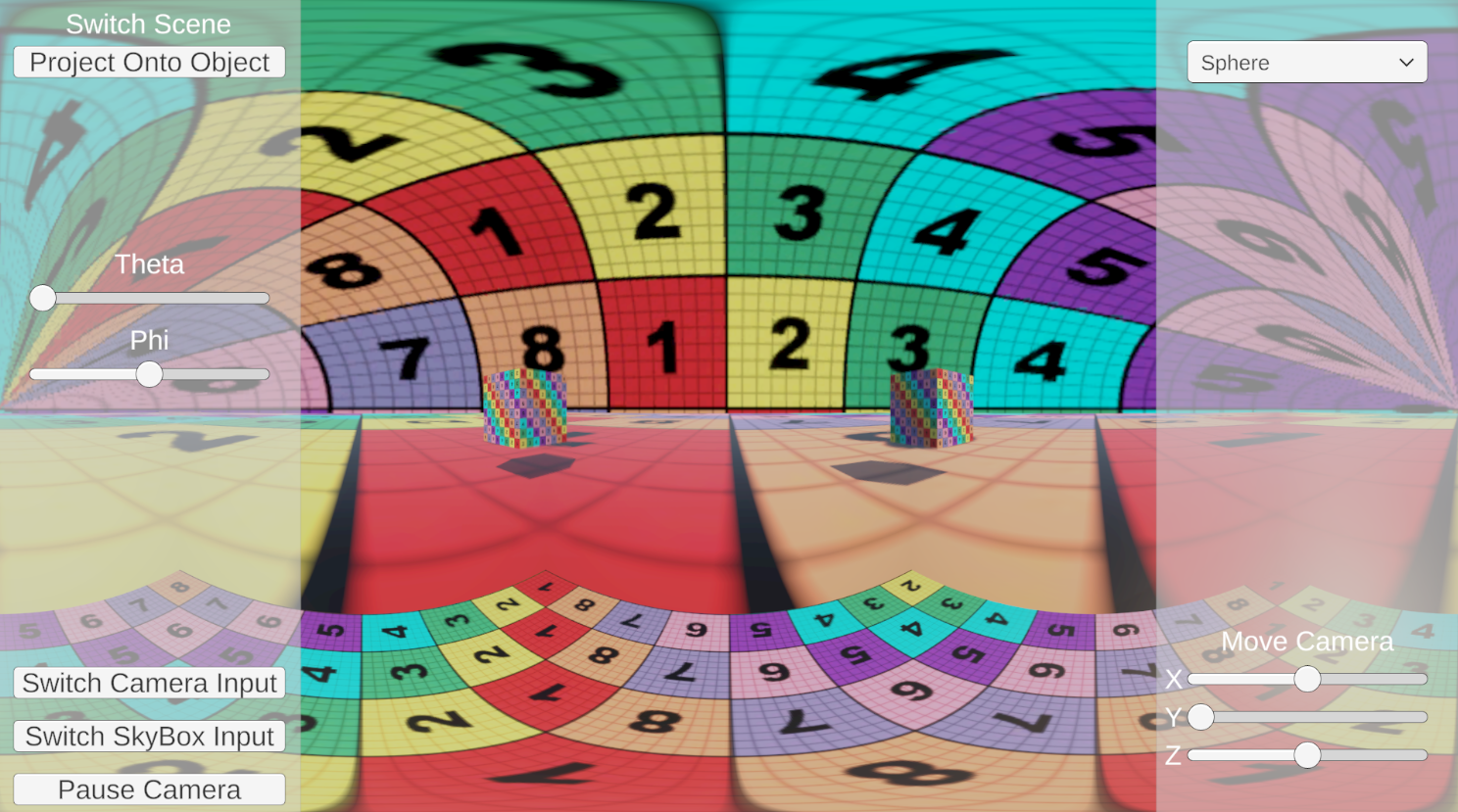

The object-based method applies the projection onto a three-dimensional geometric surface: the rendered image is mapped directly onto a corresponding 3D object (e.g., a sphere or cone). The object-independent method performs the projection mathematically at the rendering stage, modifying the projection calculations directly instead of relying on an intermediary 3D object. Implementing both approaches for each projection type enables a comparative analysis of their visual and spatial characteristics. The evaluation focuses on differences in visual distortion, spatial continuity, and overall image coherence across projection methods and implementation strategies.

The object-independent approach generates non-planar projections directly in screen space using mathematical mapping rather than projecting onto an intermediary 3D object. In these shaders, each screen pixel's UV coordinates are converted into a direction vector through spherical or conical mapping functions. The direction vector is then used to sample a cubemap texture representing the captured environment, producing an image that appears projected onto a curved surface. This method treats the projection as a purely mathematical transformation from 2D UV coordinates to 3D direction space, so the result is independent of any target geometry and entirely defined by the projection model itself.

The object-based approach projects a texture onto non-planar 3D geometry, using the object's surface as an active component of the projection process. The image is mapped from a virtual projector located at a defined world position, and the projector's view-projection matrix determines how each point on the geometry is transformed into projector space. Pixels that fall outside the projector's frustum or face away from the projector are discarded, so the projection only appears on visible surface regions. The shader also applies geometry-aware distortion based on the angle between the surface normal and the projector direction, producing a curved, fisheye-like deformation that increases at grazing angles. The resulting projection is therefore inherently dependent on the shape and orientation of the target object.
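To make the object-independent mapping concrete, here is a minimal sketch in Python rather than shader code. The project's exact mapping formulas are not given above, so this assumes a common longitude/latitude convention for the spherical case and a unit-radius cylinder for the cylindrical case; the conical mapping is left out because its definition is not stated. The function names and the `height` parameter are hypothetical.

```python
import math

def spherical_direction(u, v):
    """Map UV in [0, 1]^2 to a unit direction via longitude/latitude (assumed convention)."""
    lon = (u - 0.5) * 2.0 * math.pi   # -pi .. pi around the vertical axis
    lat = (v - 0.5) * math.pi         # -pi/2 .. pi/2 above or below the horizon
    return (math.cos(lat) * math.sin(lon),
            math.sin(lat),
            math.cos(lat) * math.cos(lon))

def cylindrical_direction(u, v, height=2.0):
    """Map UV to a unit direction through the wall of a unit-radius cylinder.

    `height` is a hypothetical parameter: the cylinder's extent along its axis.
    """
    lon = (u - 0.5) * 2.0 * math.pi
    y = (v - 0.5) * height
    x, z = math.sin(lon), math.cos(lon)
    length = math.sqrt(x * x + y * y + z * z)
    return (x / length, y / length, z / length)

def dominant_cube_face(direction):
    """Name the cubemap face a direction falls on: the axis with the largest magnitude."""
    x, y, z = direction
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax >= ay and ax >= az:
        return "+X" if x > 0 else "-X"
    if ay >= az:
        return "+Y" if y > 0 else "-Y"
    return "+Z" if z > 0 else "-Z"
```

Under these assumptions the screen centre looks straight ahead: `dominant_cube_face(spherical_direction(0.5, 0.5))` selects the `+Z` face. In a shader, the direction would be passed to the cubemap sampler directly, which performs the face selection itself.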
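The object-based projector test can be sketched in the same way. This is an illustration under simplifying assumptions, not the project's shader: the projector looks along +Z with no rotation, depth is mapped to [0, 1], and all function names are hypothetical. The source does not give the distortion formula, so the `strength` term below is only a placeholder that scales the centred UV as the angle between the normal and the projector direction grows.

```python
import math

def make_view_projection(projector_pos, fov_y_deg, aspect, near, far):
    """Row-major 4x4 view-projection matrix for a projector looking along +Z.

    Assumption: no rotation, so the view transform is a pure translation.
    """
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2.0)
    px, py, pz = projector_pos
    a = far / (far - near)            # depth is 0 at near and 1 at far after the divide
    b = -near * far / (far - near)
    return [
        [f / aspect, 0.0, 0.0, -px * f / aspect],
        [0.0, f, 0.0, -py * f],
        [0.0, 0.0, a, -pz * a + b],
        [0.0, 0.0, 1.0, -pz],
    ]

def project_onto_surface(view_proj, projector_pos, world_pos, normal, strength=0.0):
    """Return the projector-texture UV for a surface point, or None if it is discarded."""
    x, y, z = world_pos
    clip = [r[0] * x + r[1] * y + r[2] * z + r[3] for r in view_proj]
    if clip[3] <= 0.0:
        return None                   # at or behind the projector
    ndc_x, ndc_y, depth = (clip[i] / clip[3] for i in range(3))
    if abs(ndc_x) > 1.0 or abs(ndc_y) > 1.0 or not 0.0 <= depth <= 1.0:
        return None                   # outside the projector's frustum
    to_proj = [p - w for p, w in zip(projector_pos, world_pos)]
    dist = math.sqrt(sum(c * c for c in to_proj))
    n_len = math.sqrt(sum(c * c for c in normal))   # normal assumed non-zero
    cos_angle = sum(n * t for n, t in zip(normal, to_proj)) / (dist * n_len)
    if cos_angle <= 0.0:
        return None                   # surface faces away from the projector
    # Placeholder distortion (assumption): grows as the surface turns to grazing angles.
    scale = 1.0 + strength * (1.0 - cos_angle)
    return (0.5 + 0.5 * ndc_x * scale, 0.5 + 0.5 * ndc_y * scale)
```

A point directly in front of the projector and facing it maps to the texture centre, while points behind the projector, outside the frustum, or facing away return `None`, which corresponds to a discarded pixel. With `strength` above zero the returned UV can leave the [0, 1] range, so a real shader would need to clamp or discard those samples.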